lale.lib.aif360 package

Submodules

- lale.lib.aif360.adversarial_debiasing module

- lale.lib.aif360.bagging_orbis_classifier module

- lale.lib.aif360.calibrated_eq_odds_postprocessing module

- lale.lib.aif360.datasets module

  fetch_adult_df(), fetch_bank_df(), fetch_compas_df(), fetch_compas_violent_df(), fetch_creditg_df(), fetch_default_credit_df(), fetch_heart_disease_df(), fetch_law_school_df(), fetch_meps_panel19_fy2015_df(), fetch_meps_panel20_fy2015_df(), fetch_meps_panel21_fy2016_df(), fetch_nlsy_df(), fetch_nursery_df(), fetch_ricci_df(), fetch_speeddating_df(), fetch_student_math_df(), fetch_student_por_df(), fetch_tae_df(), fetch_titanic_df(), fetch_us_crime_df()

- lale.lib.aif360.disparate_impact_remover module

- lale.lib.aif360.eq_odds_postprocessing module

- lale.lib.aif360.gerry_fair_classifier module

- lale.lib.aif360.lfr module

- lale.lib.aif360.meta_fair_classifier module

- lale.lib.aif360.optim_preproc module

- lale.lib.aif360.orbis module

- lale.lib.aif360.prejudice_remover module

- lale.lib.aif360.protected_attributes_encoder module

- lale.lib.aif360.redacting module

- lale.lib.aif360.reject_option_classification module

- lale.lib.aif360.reweighing module

- lale.lib.aif360.util module

  FairStratifiedKFold, accuracy_and_disparate_impact(), average_odds_difference(), balanced_accuracy_and_disparate_impact(), count_fairness_groups(), dataset_to_pandas(), disparate_impact(), equal_opportunity_difference(), f1_and_disparate_impact(), fair_stratified_train_test_split(), r2_and_disparate_impact(), statistical_parity_difference(), symmetric_disparate_impact(), theil_index()

Module contents

Scikit-learn compatible wrappers for several operators and metrics from AIF360, along with schemas that enable hyperparameter tuning, as well as functions for fetching fairness datasets.

All operators and metrics in the Lale wrappers for AIF360 take two arguments, favorable_labels and protected_attributes, collectively referred to as fairness info. For example, the following code indicates that the favorable label is "good", that the reference group for the personal_status attribute comprises its male values, and that the reference group for the age attribute comprises values from 26 to 1000.

```python
creditg_fairness_info = {
    "favorable_labels": ["good"],
    "protected_attributes": [
        {
            "feature": "personal_status",
            "reference_group": [
                "male div/sep", "male mar/wid", "male single",
            ],
        },
        {"feature": "age", "reference_group": [[26, 1000]]},
    ],
}
```
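To make the meaning of fairness info concrete, here is a minimal pure-Python sketch of how it could be interpreted. It is not Lale's implementation: the helpers `in_reference_group`, `encode_fairness_info`, and `disparate_impact_ratio`, as well as the sample rows, are hypothetical. The sketch assumes that a two-element list inside `reference_group` denotes an inclusive numeric range, that each protected attribute is encoded separately as 1 (in the reference group) or 0 (otherwise), and that a label is encoded as 1 when it is favorable. The ratio it computes is the usual disparate impact: the favorable rate outside the reference group divided by the favorable rate inside it.

```python
from typing import Any, Dict, List, Sequence, Tuple

creditg_fairness_info = {
    "favorable_labels": ["good"],
    "protected_attributes": [
        {
            "feature": "personal_status",
            "reference_group": ["male div/sep", "male mar/wid", "male single"],
        },
        {"feature": "age", "reference_group": [[26, 1000]]},
    ],
}


def in_reference_group(value: Any, reference_group: Sequence[Any]) -> int:
    """Return 1 if value equals a listed value or lies in a [low, high] range, else 0."""
    for item in reference_group:
        if isinstance(item, (list, tuple)):
            low, high = item
            # Assumption: range bounds are inclusive and only apply to numbers.
            if isinstance(value, (int, float)) and low <= value <= high:
                return 1
        elif value == item:
            return 1
    return 0


def encode_fairness_info(
    rows: List[Dict[str, Any]], labels: List[Any], fairness_info: Dict[str, Any]
) -> Tuple[List[Dict[str, int]], List[int]]:
    """Hypothetical helper: encode each protected attribute and each label as 0 or 1."""
    protected = [
        {
            pa["feature"]: in_reference_group(row[pa["feature"]], pa["reference_group"])
            for pa in fairness_info["protected_attributes"]
        }
        for row in rows
    ]
    y = [1 if label in fairness_info["favorable_labels"] else 0 for label in labels]
    return protected, y


def disparate_impact_ratio(y01: List[int], group01: List[int]) -> float:
    """Favorable rate outside the reference group divided by the rate inside it."""

    def favorable_rate(member: int) -> float:
        outcomes = [outcome for outcome, g in zip(y01, group01) if g == member]
        return sum(outcomes) / len(outcomes)

    return favorable_rate(0) / favorable_rate(1)


# Hypothetical sample rows in the style of the credit-g dataset.
rows = [
    {"personal_status": "male single", "age": 35},
    {"personal_status": "female div/dep/mar", "age": 22},
    {"personal_status": "male div/sep", "age": 24},
    {"personal_status": "female div/dep/mar", "age": 40},
]
labels = ["good", "bad", "good", "good"]

protected, y = encode_fairness_info(rows, labels, creditg_fairness_info)
status_groups = [p["personal_status"] for p in protected]
# Here the encoded labels stand in for predictions.
print(y, disparate_impact_ratio(y, status_groups))  # prints: [1, 0, 1, 1] 0.5
```

In this toy data, every reference-group row for personal_status is favorable, but only half of the other rows are, which yields a ratio of 0.5. Encoding each attribute separately keeps the sketch simple; how Lale combines several protected attributes is not covered here.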

See the following notebooks for more detailed examples:

Pre-Estimator Mitigation Operators:

In-Estimator Mitigation Operators:

Post-Estimator Mitigation Operators:

Datasets:

Metrics:

Other Classes and Operators:

Other Functions:

Mitigator Patterns:

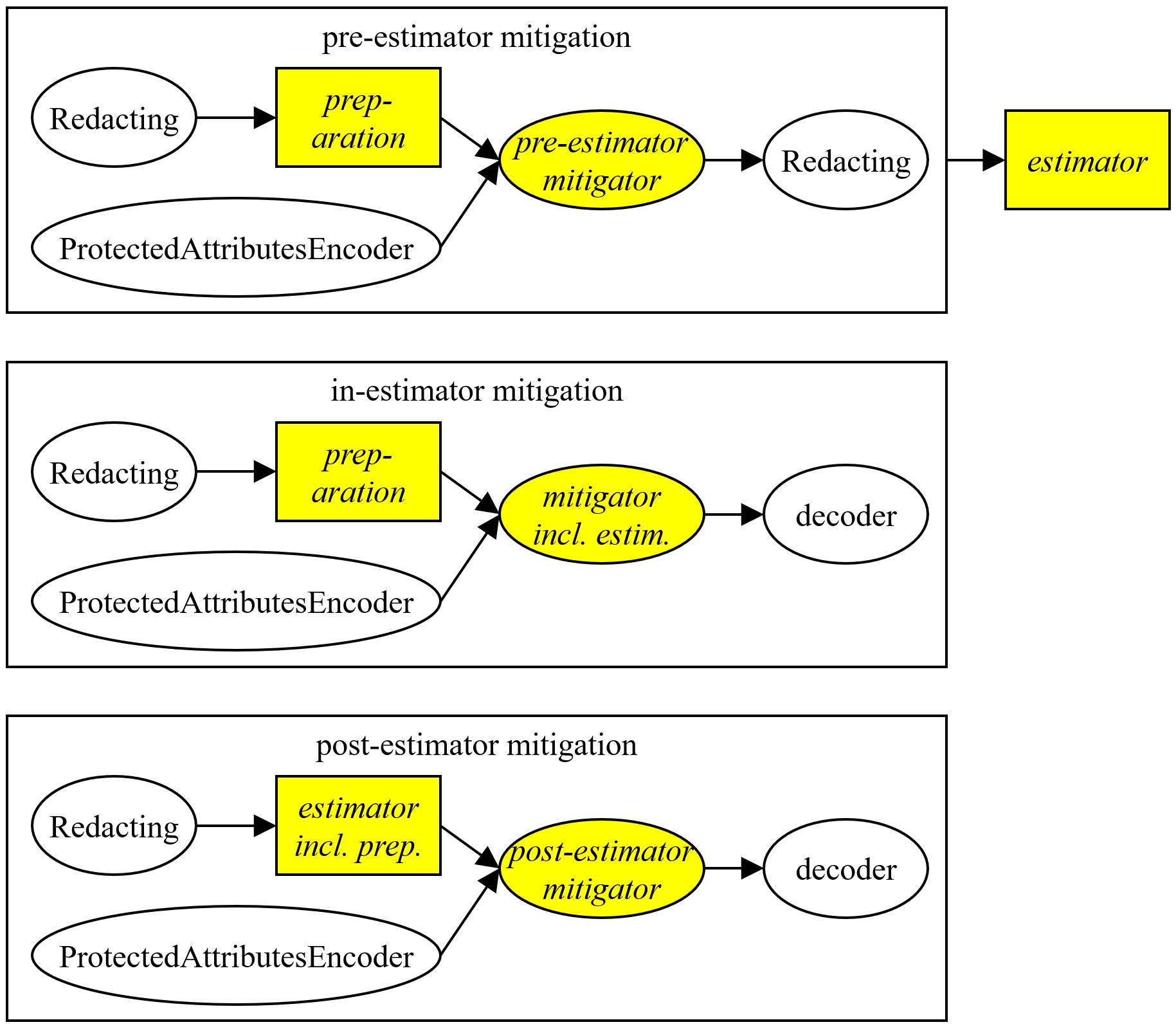

AIF360 provides three kinds of fairness mitigators, illustrated in the picture above. Pre-estimator mitigators transform the data before it reaches an estimator; in-estimator mitigators include their own estimator; and post-estimator mitigators transform predictions after they come back from an estimator.
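The data flow of the pre-estimator and post-estimator patterns can be sketched in plain Python with scikit-learn-style `fit`/`predict` methods. All classes below (`PreEstimatorMitigation`, `PostEstimatorMitigation`, and the toy components) are hypothetical illustrations, not Lale or AIF360 APIs; in particular, the `adjust` method of the post-estimator mitigator is an invented name. An in-estimator mitigator needs no wrapper in this sketch, because it already exposes `fit` and `predict` with its own estimator inside.

```python
from typing import Any, List

Matrix = List[List[float]]


class PreEstimatorMitigation:
    """Pre-estimator pattern: the mitigator transforms data, then the estimator fits on it."""

    def __init__(self, mitigator: Any, estimator: Any) -> None:
        self.mitigator = mitigator
        self.estimator = estimator

    def fit(self, X: Matrix, y: List[int]) -> "PreEstimatorMitigation":
        X_mitigated = self.mitigator.fit(X, y).transform(X)
        self.estimator.fit(X_mitigated, y)
        return self

    def predict(self, X: Matrix) -> List[int]:
        return self.estimator.predict(self.mitigator.transform(X))


class PostEstimatorMitigation:
    """Post-estimator pattern: the estimator predicts, then the mitigator adjusts predictions."""

    def __init__(self, estimator: Any, mitigator: Any) -> None:
        self.estimator = estimator
        self.mitigator = mitigator

    def fit(self, X: Matrix, y: List[int]) -> "PostEstimatorMitigation":
        self.estimator.fit(X, y)
        # The mitigator learns from the true labels and the estimator's predictions.
        self.mitigator.fit(X, y, self.estimator.predict(X))
        return self

    def predict(self, X: Matrix) -> List[int]:
        return self.mitigator.adjust(X, self.estimator.predict(X))


# --- Toy components (hypothetical, for illustration only) ---
class RecordingEstimator:
    """Predicts the majority training label and remembers the features it was fitted on."""

    def fit(self, X: Matrix, y: List[int]) -> "RecordingEstimator":
        self.seen_X_ = X
        self.majority_ = 1 if 2 * sum(y) >= len(y) else 0
        return self

    def predict(self, X: Matrix) -> List[int]:
        return [self.majority_] * len(X)


class ZeroColumn:
    """Toy pre-estimator mitigator: overwrites column 0 with a constant."""

    def fit(self, X: Matrix, y: List[int]) -> "ZeroColumn":
        return self

    def transform(self, X: Matrix) -> Matrix:
        return [[0.0] + row[1:] for row in X]


class OverrideForGroup:
    """Toy post-estimator mitigator: predicts 1 wherever column 0 equals 1."""

    def fit(self, X: Matrix, y: List[int], y_pred: List[int]) -> "OverrideForGroup":
        return self

    def adjust(self, X: Matrix, y_pred: List[int]) -> List[int]:
        return [1 if row[0] == 1 else p for row, p in zip(X, y_pred)]


X = [[1.0, 5.0], [0.0, 3.0], [1.0, 2.0]]
y = [0, 0, 1]
pre = PreEstimatorMitigation(ZeroColumn(), RecordingEstimator()).fit(X, y)
post = PostEstimatorMitigation(RecordingEstimator(), OverrideForGroup()).fit(X, y)
print(pre.estimator.seen_X_)  # the estimator only saw the mitigated features
print(post.predict(X))  # prints: [1, 0, 1]
```

The usage shows the design difference: in the pre-estimator pattern the estimator never sees the original column 0, whereas in the post-estimator pattern the estimator is untouched and only its outputs are changed.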

In the picture, italics indicate parameters of the pattern. For example, consider the following code:

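```python
from lale.lib.aif360 import LFR
from lale.lib.lale import ConcatFeatures, Project
from lale.lib.sklearn import LogisticRegression, OneHotEncoder

# fairness_info is a dict such as creditg_fairness_info above.
fairness_info = creditg_fairness_info

pipeline = LFR(
    **fairness_info,
    # preparation: one-hot-encode string columns, keep numeric columns, concatenate both
    preparation=(
        (Project(columns={"type": "string"}) >> OneHotEncoder(handle_unknown="ignore"))
        & Project(columns={"type": "number"})
    )
    >> ConcatFeatures,
) >> LogisticRegression(max_iter=1000)
```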

In this example, the mitigator is LFR (a pre-estimator mitigator), the estimator is LogisticRegression, and the preparation is a sub-pipeline that one-hot-encodes string columns, keeps numeric columns, and concatenates the results. If all features of the data are numerical, the preparation can be omitted. Internally, the LFR higher-order operator uses two auxiliary operators, Redacting and ProtectedAttributesEncoder. Redacting sets protected attributes to a constant to prevent them from directly influencing fairness-agnostic data preparation or estimators. ProtectedAttributesEncoder encodes protected attributes and labels as zero or one to simplify the task for the mitigator.
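The redaction step described above can be sketched on rows represented as dictionaries. `RedactingSketch` is a hypothetical stand-in, not Lale's `Redacting` operator; in particular, using the most frequent training value of each protected feature as its constant is an assumption of this sketch. What it illustrates is that downstream preparation and estimators still receive the protected columns, but those columns no longer distinguish one row from another.

```python
from collections import Counter
from typing import Any, Dict, List

Row = Dict[str, Any]


class RedactingSketch:
    """Hypothetical stand-in for Redacting: overwrites protected features with constants."""

    def __init__(self, protected_attributes: List[Dict[str, Any]]) -> None:
        self.features = [pa["feature"] for pa in protected_attributes]

    def fit(self, X: List[Row]) -> "RedactingSketch":
        # Assumption: the constant for each feature is its most frequent training value.
        self.constants_ = {
            f: Counter(row[f] for row in X).most_common(1)[0][0] for f in self.features
        }
        return self

    def transform(self, X: List[Row]) -> List[Row]:
        # Build new rows: protected features are replaced, all other features are copied as-is.
        return [{**row, **self.constants_} for row in X]


protected_attributes = [
    {
        "feature": "personal_status",
        "reference_group": ["male div/sep", "male mar/wid", "male single"],
    },
    {"feature": "age", "reference_group": [[26, 1000]]},
]
train = [
    {"personal_status": "female div/dep/mar", "age": 22, "duration": 6},
    {"personal_status": "male single", "age": 45, "duration": 12},
    {"personal_status": "male single", "age": 45, "duration": 24},
]
redacted = RedactingSketch(protected_attributes).fit(train).transform(train)
# Every row now has personal_status "male single" and age 45; duration is unchanged.
print(redacted)
```

Because `transform` builds new dictionaries, the original training rows are left intact, so a mitigator that runs alongside the redacted preparation can still see the real protected values.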